Manufacturing tolerances keep getting tighter, and many fabricators are also being held to more stringent measurement guidelines.

Increasingly, we hear of Gage Repeatability and Reproducibility (GR&R) studies being required before a particular gage or measuring system can be “trusted” to perform the measuring task.

In short, the GR&R confirms that the operator, part, environment, standard, and gage precision uncertainties do not, in total, exceed a specified percentage of the total part tolerance.

Since the GR&R study takes some doing, as numerous parts and different operators must be gathered and the data run through a formula, many find a new gaging Rule of Thumb helpful.
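The formula itself is not reproduced in this article. As a rough sketch only, one common way a GR&R study expresses its result against tolerance is shown here. The symbols are assumptions introduced for illustration: $\sigma_{EV}$ is the repeatability (equipment variation) standard deviation, $\sigma_{AV}$ is the reproducibility (appraiser variation) standard deviation, and $T$ is the total tolerance Bandwidth. The multiplier 6 is a convention; some procedures use 5.15 instead.

```latex
\sigma_{GRR} = \sqrt{\sigma_{EV}^{2} + \sigma_{AV}^{2}},
\qquad
\%\,GRR = 100 \times \frac{6\,\sigma_{GRR}}{T}
```

A specific customer requirement may define the calculation differently, so the governing procedure should always be checked.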

The old Rule of Thumb was that a gaging method should provide resolution of 10% of the total tolerance. The old rule never took into consideration all the other variables involved in a measurement. Today, typical GR&R requirements demand that the measurement uncertainty due to all the known variables not exceed 10% of the tolerance.

New GR&R Rule of Thumb

Typically, when the raw repeatability equals 3% of the total bandwidth tolerance, the gage or measurement system will provide a 10% Gage R&R. If a 20% Gage R&R is allowable, then the raw repeatability may be as high as 6%, and so on.

Example: Nominal: 1.000″ with a +/- .005″ Tolerance (.010″ Bandwidth)

A 10% allowable Gage R&R specification would equal .001″ (i.e. 10% of Bandwidth)

If we had readings of 1.0001, 1.00005, .9999, and 1.0002, we would have a range of .0003″ (.9999″ to 1.0002″). This would be acceptable, as it equals but does not exceed 3% of the Bandwidth tolerance. If the range were .0004″ (4% of the Bandwidth), the Gage R&R, when finally run through the formula, would likely exceed the 10% requirement.
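The check in this example can be sketched in a few lines of Python. This is only an illustration of the Rule of Thumb, not a GR&R calculation; the function name `raw_repeatability_check` and its parameters are hypothetical, and `Decimal` is used so the tiny inch values compare exactly.

```python
from decimal import Decimal

def raw_repeatability_check(readings, bandwidth, max_fraction=Decimal("0.03")):
    """Return (range, limit, ok) for a set of repeat readings.

    max_fraction is the Rule of Thumb share of the Bandwidth
    (3% for a 10% Gage R&R requirement).
    """
    spread = max(readings) - min(readings)   # raw repeatability range
    limit = bandwidth * max_fraction         # largest range the rule allows
    return spread, limit, spread <= limit

# Readings from the example: nominal 1.000" with a +/- .005" tolerance
readings = [Decimal(s) for s in ("1.0001", "1.00005", "0.9999", "1.0002")]
bandwidth = Decimal("0.010")

spread, limit, ok = raw_repeatability_check(readings, bandwidth)
print(f"range {spread} in, limit {limit} in, acceptable: {ok}")
```

A range that only equals the limit passes here, matching the "equals but does not exceed" wording of the example.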

Of course, if a 20% Gage R&R is acceptable, then using the same .010″ Bandwidth you can likely be comfortable with a repeatability range of .0006″ and still be safe when the final calculations are completed. Extrapolating, one can then readily imagine that at 30% Gage R&R, 9% of the Bandwidth, or in this case .0009″, is an acceptable range of measurements.
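The scaling described here is linear, so it can be tabulated quickly. The sketch below assumes the rule is simply "3% of Bandwidth per 10% of allowed Gage R&R"; the names `RULE_RATIO` and `allowable_range` are hypothetical.

```python
from decimal import Decimal

# Rule of Thumb: raw repeatability range is about 0.3 x the allowed Gage R&R percentage
RULE_RATIO = Decimal("0.3")

def allowable_range(bandwidth, grr_percent):
    """Largest raw repeatability range the Rule of Thumb suggests, in the units of bandwidth."""
    return bandwidth * Decimal(grr_percent) / 100 * RULE_RATIO

bandwidth = Decimal("0.010")  # same .010" Bandwidth as the example
for grr in (10, 20, 30):
    print(f"{grr}% Gage R&R: range up to {allowable_range(bandwidth, grr):.4f} in")
```

The result is a screening number only; the full study still decides whether the gage passes.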

Most GR&R requirements specify 10%; 20% is a stretch, and 30% is very rarely permitted.

In no way does the Rule of Thumb eliminate the need for proper GR&R studies; it is purely a quick overview of a particular gage or system's capabilities for an intended purpose.

GR&R procedures allow for separation of operator reproducibility from gage error. This divides the “blame” for the uncertainty, but in reality the gage is generally saddled with the full brunt of any failure to meet the desired specification, without regard to all of the variables that affect the final outcome. The very term GAGE R&R seems to place the blame for whatever the problem is directly on the gage.

SWIPE

SWIPE is a mnemonic which stands for the following influencers of total measurement performance:

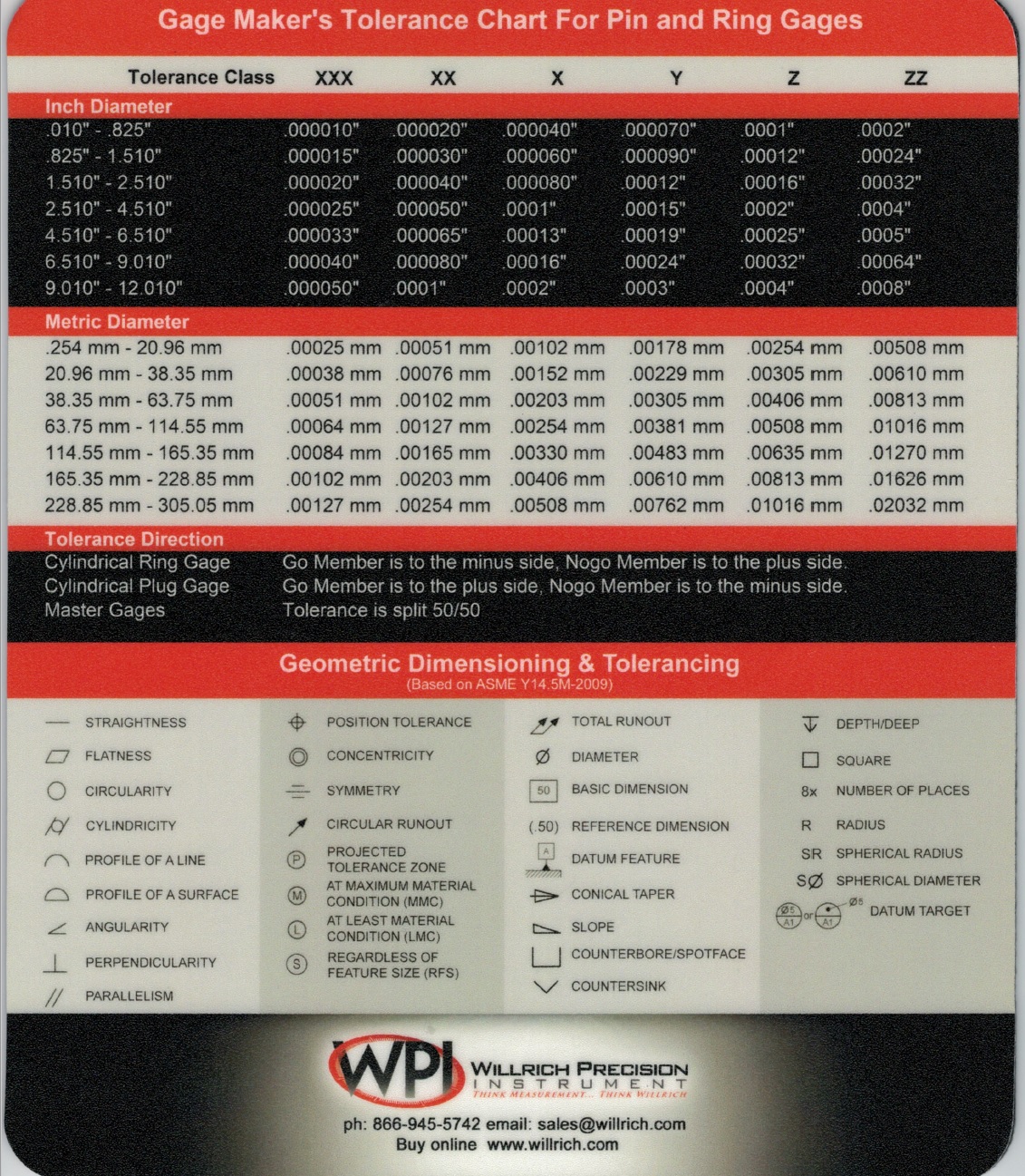

S- The Standard: is it certified, and when? Is it the proper class? For example, in setting a bore gage to gage a 1″ hole having a .0005″ Bandwidth tolerance, if one were to use a class Y tolerance master, the uncertainty of the master alone could be as much as .0001″, which is 20% of the total tolerance of the hole to begin with. The roundness of the master may be off by up to .00005″, which is 10% of the tolerance and by itself uses up a 10% Gage R&R allowance.

W- The Workpiece: every part varies, some more than others. Are the R&R operators aware of the variation within a part? Does the part have intrinsic taper, out-of-roundness conditions, surface finish variations, etc. that can affect the measurements? Simply not making measurements in the same place or zone on the part each time can cause the R&R to suffer significantly. A .0001″ out-of-roundness condition can consume 20% of the total part tolerance using the example above.
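To see how quickly the Standard and the Workpiece eat into the tolerance, each contributor from the 1″ hole example can be expressed as a percentage of the .0005″ Bandwidth. This is a minimal sketch with hypothetical names (`percent_of_tolerance`, `contributors`); it reports each share separately and does not attempt to combine them into a GR&R figure.

```python
from decimal import Decimal

def percent_of_tolerance(error, bandwidth):
    """Express one error contributor as a percentage of the tolerance Bandwidth."""
    return error / bandwidth * 100

bandwidth = Decimal("0.0005")  # 1" hole example
contributors = {
    "master uncertainty (Standard)": Decimal("0.0001"),
    "master roundness (Standard)": Decimal("0.00005"),
    "part out-of-roundness (Workpiece)": Decimal("0.0001"),
}

shares = {name: percent_of_tolerance(err, bandwidth) for name, err in contributors.items()}
for name, share in shares.items():
    print(f"{name}: {share:.0f}% of tolerance")
```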

I- The Instrument itself obviously has linearity and repeatability characteristics. Whatever they may be, they clearly add to the gaging uncertainty. In addition, certain instruments are more sensitive to operator loading, use, and care.

P- The Personnel and their ability to adapt the gage to the part is an ever important factor. Surely the gage’s vulnerability to operator influence can be considered the gage’s fault. However, one should not discount the variation in touch and experience that the operator brings to these tests. With some operators and their influence, there may be no gage or inspection equipment made that can perform the measuring task at hand. Surely an enigma, but best handled when best understood.

E- The Environment. Parts that are dirty, oily, hot, or even cold are poor candidates for R&R testing methods. They may represent real-world conditions, but they offer no stable ground on which to buy off on a gage’s ability.

CONCLUSION

So there you have it, the SWIPE scenario. The answer may very well be that, considering all of the variables, the only one that can be rectified is the gage’s intrinsic accuracy and repeatability. In this case it becomes necessary to obtain gages of a higher order. This may mean changing from Mechanically applied hand tools to Electronic or Air Gage tooling. These tools permit higher resolution, linearity, and repeatability. They limit operator influence and offer output to SPC and signaling modules. The cost may increase, but the value per item measured makes these types of tools irreplaceable.

Gage R&R, while an important measure of the measurement system, requires careful consideration in its application.

The entire GR&R issue raises a red flag on many accepted measuring methods. What was entirely acceptable before GR&R requirements is suddenly unacceptable.

An example is the enormous usage of plug gages and dial bore gages to measure hole size. A simple matter, one would think. But consider a .250″ hole with a +/- .0005″ tolerance (.001″ Bandwidth): a 3% range in raw repeatability equates to .000030″, or less than a micron. There are no dial bore gages capable of this level of raw repeatability. Most have a dial resolution of .0001″ at best. Right there, in resolution alone, 10% of the tolerance has been used up.
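The arithmetic behind this example, including the inch-to-micron conversion, can be verified with a short sketch. The variable names are hypothetical; the only outside fact used is that one inch is exactly 25.4 mm (25,400 microns).

```python
from decimal import Decimal

MICRONS_PER_INCH = Decimal("25400")  # 1 in = 25.4 mm exactly

bandwidth = Decimal("0.0005") * 2              # +/- .0005" tolerance gives a .001" Bandwidth
target_range = bandwidth * Decimal("0.03")     # 3% raw repeatability for a 10% Gage R&R
target_range_um = target_range * MICRONS_PER_INCH

dial_resolution = Decimal("0.0001")            # best-case dial bore gage resolution
resolution_share = dial_resolution / bandwidth * 100

print(f"target raw range: {target_range} in = {target_range_um:.3f} microns")
print(f"dial resolution alone: {resolution_share:.0f}% of tolerance")
```

The required range works out to well under one micron, while a single dial graduation already spans a tenth of the tolerance.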

WANT MORE INFORMATION OR TO FURTHER DISCUSS, CALL: 866-WILLRICH
