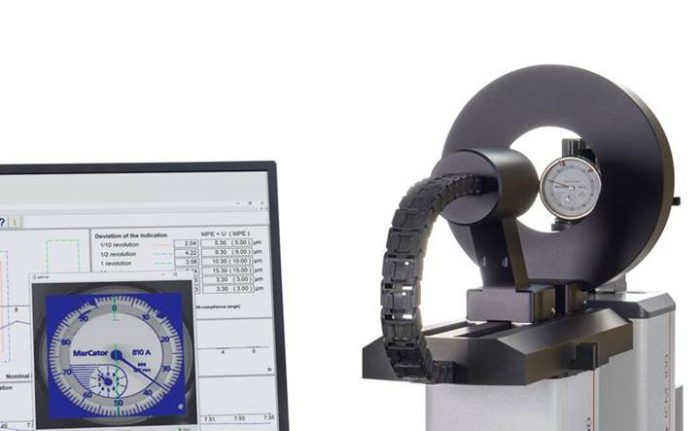

Manufacturers continue to push for tighter tolerances, faster throughput, and greater process control. Traditional quality inspection often struggles to keep pace with these demands when measurement systems sit far from production. Transporting parts off the floor for inspection introduces delays, handling risk, and missed opportunities to correct issues early.

Modern shop floor CNC coordinate measuring machines solve this problem by bringing high-accuracy inspection closer to production. Systems designed specifically for harsh environments now deliver reliable results without sacrificing speed or precision.

Built for Real Production Environments

The Mitutoyo MiSTAR Series CNC CMM demonstrates how shop floor inspection has evolved. Unlike laboratory-only CMMs, MiSTAR operates directly on the production floor while maintaining stable measurement performance.

MiSTAR withstands vibration, dust, and temperature variation common in manufacturing facilities. Its rigid construction and sealed components protect sensitive systems from contamination. This design reduces downtime and minimizes the need for frequent maintenance, even in high-use environments.

Available in two sizes, the 555 and the 575, the system accommodates a range of part dimensions while keeping a compact footprint. Manufacturers gain flexibility without sacrificing floor space.

Accuracy Across a Wide Temperature Range

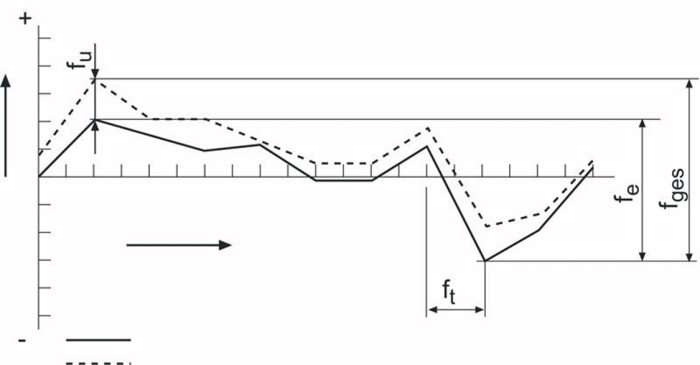

Temperature variation remains one of the biggest challenges in shop floor metrology. Fluctuations affect material size, machine stability, and measurement repeatability.

MiSTAR addresses this issue with a market-leading accuracy guarantee that holds across temperatures from 10°C to 40°C. Real-time temperature compensation continuously adjusts measurements based on ambient conditions, so operators can trust results without transporting parts to climate-controlled rooms.
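To illustrate the general idea behind temperature compensation, here is a minimal Python sketch of the standard linear thermal expansion correction, which scales a length measured at shop temperature back to its equivalent at the 20°C reference temperature used in dimensional metrology. This is not MiSTAR's actual algorithm: the function name, the steel expansion coefficient, and the sample values are assumptions chosen for illustration, and a real CMM also compensates for expansion of its own scales.

```python
REFERENCE_TEMP_C = 20.0  # standard reference temperature for dimensional measurement


def compensate_length(measured_mm: float, part_temp_c: float, cte_per_c: float) -> float:
    """Return the 20 °C equivalent of a length measured at part_temp_c.

    Uses the linear expansion model L_T = L_20 * (1 + cte * (T - 20)).
    Only workpiece expansion is modeled here (a simplifying assumption).
    """
    expansion_factor = 1.0 + cte_per_c * (part_temp_c - REFERENCE_TEMP_C)
    return measured_mm / expansion_factor


if __name__ == "__main__":
    # Hypothetical example: a steel part (CTE assumed to be 11.5e-6 per °C)
    # that reads 100.0115 mm on a 30 °C shop floor.
    corrected = compensate_length(100.0115, part_temp_c=30.0, cte_per_c=11.5e-6)
    print(f"Length corrected to 20 °C: {corrected:.4f} mm")
```

Under these assumed values, a 10°C rise accounts for roughly 0.0115 mm of growth on a 100 mm steel feature, which is why uncompensated shop floor readings can exceed tight tolerances even when the part itself is good.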

ABSOLUTE scale technology further enhances accuracy. Because the scales report absolute position, the system needs no homing routine after power-up and maintains a stable position reference at all times. Operators can resume measurements immediately, improving efficiency across shifts.

Speed That Matches Production Demands

Inspection systems must keep pace with modern production lines. Slow measurement creates bottlenecks and reduces throughput.

MiSTAR delivers best-in-class drive speeds that support near-line and in-line inspection. Fast positioning and scanning reduce cycle times without compromising measurement integrity. Manufacturers gain quicker feedback and can adjust processes before defects multiply.

By placing inspection closer to machining and assembly operations, teams reduce part travel and handling. Faster inspection supports lean manufacturing goals and improves overall equipment effectiveness.

Simple Installation and Operation

Complex infrastructure often limits where traditional CMMs can operate. MiSTAR removes many of these barriers.

The system runs on standard 120 V power and requires no compressed air. Its compact footprint allows installation in tight spaces near production equipment. These features simplify deployment and reduce facility modification costs.

Operation remains straightforward, even for users with limited metrology experience. The Quick Launcher interface streamlines program selection and execution. Clear workflows reduce training time and operator error.

This ease of use allows more teams to perform accurate inspection without relying on specialized staff.

Consistent Results with Minimal Maintenance

Maintenance demands often discourage shop floor measurement. MiSTAR’s contamination-resistant design and sealed guideways reduce wear and debris buildup. With fewer moving parts exposed to the environment, service intervals grow longer.

Combined with stable scale technology and automatic compensation, the system delivers consistent results shift after shift. Manufacturers maintain confidence in measurement data while controlling operating costs.

Supporting Quality Where It Matters Most

Accurate inspection close to production improves quality at every stage. Early detection prevents scrap, reduces rework, and supports continuous improvement efforts.

At Willrich Precision Instruments, we help manufacturers select and support shop floor metrology solutions that match real-world production needs. Our expertise spans equipment selection, calibration, and long-term measurement support.

Shop floor CNC CMM systems like MiSTAR enable accurate, efficient inspection where it matters most. By integrating measurement into production, manufacturers improve productivity while protecting product quality.